Stuff Michael Meeks is doing


"The main issues that we are facing today are mostly the result of the managerial style of TDF in general. Designed by committee:"As should be obvious to anyone, the solution to committees - is more committees. Apparently this new one would set a technical direction that made more sense.

...

"If I were on the Board as a dictator ... immediate freeze on all new feature requests and implementations. Then I would have put a committee together ..."

"Recognizing that the old model where everything was free has consequences, means we must explore a range of different business opportunities and alternate value exchanges,"

.odt to fill-out from the Gas & Electricity Corporation Ltd. (doing utility connections).

Today we release a big step in improving Collabora Online installability for home users. Collabora has typically focused on supporting our enterprise users who pay the bills: most of whom are familiar with getting certificates, configuring web server proxies, port numbers, and so on (with our help). The problem is that this has left home-users, eager to take advantage of our privacy and ease of use, with a large barrier to entry. We set about adding easy-to-set-up Demo Servers for users - but of course, people want to use their own hardware and not let their documents out of their site. So - today we've released a new way to do that - using a new PHP proxying protocol and app-image bundled into a single-click installable Nextcloud app (we will be bringing this to other PHP solutions soon too). This is a quick write-up of how this works.

Ideally a home user grabs CODE as a docker image, or installs the packages on their public-facing https:// webserver. Then they create their certificates for those hosts, configure their use in loolwsd.xml and/or set up an Apache or NginX reverse proxy so that the SSL unwrap & certificate magic can be done by proxying through the web-server.

This means that we end up with a nice C++ browser, talking directly

through a C kernel across the network to another C++ app: loolwsd

the web-services daemon. Sure we have an SSL transport and a websocket layered

over that, and perhaps we have an intermediate proxying/unwrapping webserver,

but life is reasonably sensible. We have a daemon that loads and persists all

documents in memory and manages and locks-down access to them. It manages low

latency, high performance, bi-directional transport - over a persistent,

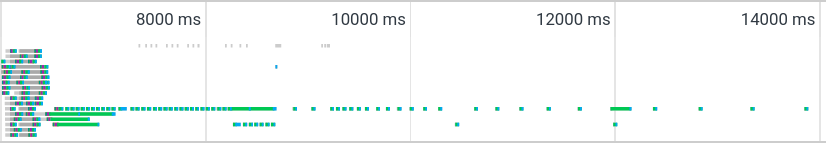

secure connection to our browsers. We even re-worked our main-loops to use

microsecond waits to avoid some silly one millisecond wasteage here and there,

here is how it looks:

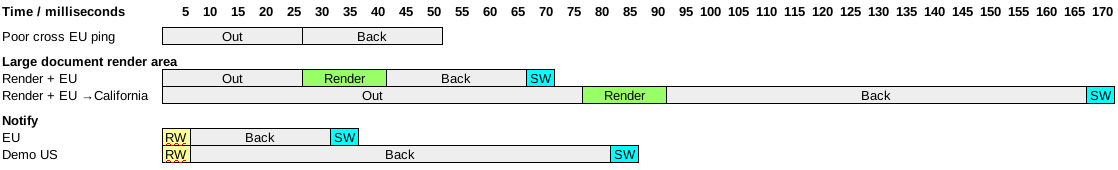

This shows a particularly poor cross-European ping (Finland to Spain) and a particularly heavy document re-rendering, as well as some S/W time to render the result in the browser. This works reasonably nicely for editing on a server in San Francisco from the EU. Push notifications are fast too.

The problem is - this world seems hard for casual users to configure.

Ideally we could setup the web server to proxy our requests nicely from the get-go, and of course Collabora Online is inevitably integrated with an already-working PHP app of some sort. So - why not re-use all of that already working PHP-goodness ?

PHP, of course, has no built-in support for web-sockets for this use-case; furthermore, PHP processes are expected to be short-lived and spawned quickly by the server, and by default they are killed for bad behavior if they are still around after a few (say 15) seconds.

From the Javascript side too - we don't appear to have any API we can use to send low-latency messages across the web except the venerable XMLHttpRequest API that launched a spasm of AJAX and 'Web 2.0' a couple of decades ago. So - we can make asynchronous requests, but we badly need very low latency to get interactive editing to work - so how is that going to pan out ?

Clearly we want to use https or TLS - but negotiating a secure connection requires a handshake that is rich in round-trip latency: exactly what we don't want.

Mercifully - web browser & server types got together a long time ago to do two things: create pools of multiple connections to web servers to get faster page loads, and also to use persistent connections (keep-alive) to get many requests down the same negotiated TLS connection.

So it turns out that in fact we can avoid the repeated TLS handshake overhead, and also make several parallel requests without a huge latency penalty, despite the XMLHttpRequest API: excellent - for client connections we lose little latency, so we can stack our own binary and text, web-socket-like protocol on top of this.
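To make the idea of a web-socket-like protocol stacked on plain HTTP requests a little more concrete, here is a minimal Python sketch of one possible framing. The format (a one-byte type, `T` for text or `B` for binary, then the payload length in decimal, a newline, and the raw payload) and the names `encode_frames` / `decode_frames` are hypothetical illustrations, not the wire format Collabora Online actually uses. What it shows is that several queued messages can be batched into a single request or response body and split apart again on the other side.

```python
def encode_frames(messages):
    """Batch messages into one HTTP body.

    Hypothetical framing: b'T' (text) or b'B' (binary), the payload
    length in decimal, a newline, then the raw payload bytes.
    """
    out = bytearray()
    for msg in messages:
        if isinstance(msg, str):
            kind, payload = b"T", msg.encode("utf-8")
        else:
            kind, payload = b"B", bytes(msg)
        out += kind + str(len(payload)).encode("ascii") + b"\n" + payload
    return bytes(out)


def decode_frames(body):
    """Split a batched body back into str (text) and bytes (binary) messages."""
    messages, pos = [], 0
    while pos < len(body):
        kind = body[pos:pos + 1]
        if kind not in (b"T", b"B"):
            raise ValueError(f"unknown frame type {kind!r} at offset {pos}")
        newline = body.index(b"\n", pos)  # raises ValueError if the header is cut off
        length = int(body[pos + 1:newline])
        start = newline + 1
        payload = body[start:start + length]
        if len(payload) != length:
            raise ValueError("truncated frame")
        messages.append(payload.decode("utf-8") if kind == b"T" else payload)
        pos = start + length
    return messages
```

Because each frame carries its own length, binary payloads such as rendered tiles may contain newlines without confusing the parser.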

Clearly we want to avoid our document daemon being regularly killed every few seconds, but luckily by disowning the spawned processes this is reasonably trivial. When we fail to connect, we can launch an app-image that can be bundled inside our PHP app close to hand, and then get it to serve the request we want.
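A minimal Python sketch of this connect-or-launch step, assuming the daemon listens on a local TCP port and that `launch_cmd` is whatever starts it (for example the bundled app-image; the command and the retry parameters are assumptions, not taken from the real proxy, which is written in PHP). Here `start_new_session=True` plays the role of disowning the child.

```python
import socket
import subprocess
import time


def connect_or_spawn(host, port, launch_cmd, retries=50, delay=0.1):
    """Connect to the document daemon, launching it detached if nothing is listening."""
    try:
        return socket.create_connection((host, port), timeout=1.0)
    except OSError:
        pass  # nothing listening yet: fall through and launch it

    # start_new_session=True calls setsid() in the child (POSIX only), so the daemon
    # leaves the request handler's session and process group; signals aimed at the
    # short-lived handler's process group do not reach it.
    subprocess.Popen(
        launch_cmd,
        start_new_session=True,
        stdin=subprocess.DEVNULL,
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )

    # Give the daemon a moment to start listening, then retry the connection.
    for _ in range(retries):
        try:
            return socket.create_connection((host, port), timeout=1.0)
        except OSError:
            time.sleep(delay)
    raise ConnectionError(f"daemon did not start listening on {host}:{port}")
```

The retry loop matters because a freshly launched daemon needs some time before it accepts connections; the request that triggered the launch pays that start-up cost once.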

Initially it was thought that this should be as simple as reading the php://input and writing it to a socket connected to loolwsd, then reading the output back and writing it to php://output.

Unfortunately things are never quite that easy:

If you love PHP, probably you want to read the Proxy code - three hundred lines, most of which should not be necessary.

The most significant problem is that we need to get data through Apache, into a spawned PHP process, and send it on via a socket quickly. Many default setups love to parse, and re-parse the PHP for each request, so when we first integrated the prototype with Nextcloud's PHP infrastructure (allowing every app to have its say and so on), we went from ~3ms to ~110ms of overhead per request. Not ideal.

Thankfully, with some help and a tweak to the NginX config, we can use our standalone proxy.php as above; nice.

Then it is necessary to get the HTTP request, its length, and content re-constructed from what PHP gives you. This means iterating over the versions of the headers that PHP has carefully parsed, to carefully serialize them again. Then - trying to get the request body.
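A minimal Python sketch of that re-serialization, assuming the headers arrive as a CGI-style environment, which is the convention PHP's `$_SERVER` follows: request headers appear as `HTTP_*` keys, while `CONTENT_TYPE` and `CONTENT_LENGTH` come without the prefix. The function name `rebuild_request_head` is hypothetical. Note that the round trip is lossy: the original header-name casing is gone, and a header spelled with an underscore cannot be told apart from one spelled with a dash.

```python
def rebuild_request_head(environ):
    """Re-serialize a CGI-style environment (as in PHP's $_SERVER) into an HTTP/1.1 request head."""
    method = environ.get("REQUEST_METHOD", "GET")
    uri = environ.get("REQUEST_URI", "/")
    headers = {}
    for key, value in environ.items():
        if key.startswith("HTTP_"):
            # HTTP_USER_AGENT -> User-Agent
            name = "-".join(part.capitalize() for part in key[5:].split("_"))
        elif key in ("CONTENT_TYPE", "CONTENT_LENGTH"):
            # CGI passes these two without the HTTP_ prefix
            name = "-".join(part.capitalize() for part in key.split("_"))
        else:
            continue  # server-side variables such as SCRIPT_NAME are not headers
        headers.setdefault(name, value)  # avoid emitting a header twice
    lines = [f"{method} {uri} HTTP/1.1"] + [f"{n}: {v}" for n, v in headers.items()]
    return ("\r\n".join(lines) + "\r\n\r\n").encode("latin-1")
```

If the body itself has to be re-assembled, as with RFC 1867 uploads, the Content-Length (and any multipart boundary in Content-Type) must be updated to match the new body.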

php://input sounds like the ideal stream to use at this point, except that (un-mentioned in the documentation) for rfc1867 post handling it is an empty stream, and it is necessary to re-construct the mime-like sub-elements in PHP.
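A minimal Python sketch of re-creating such an RFC 1867 (`multipart/form-data`) body from parts that have already been parsed, roughly what PHP hands you in `$_POST` and `$_FILES`. The function name and the shape of its arguments are hypothetical, and field and file names are assumed not to contain quotes, since they are not escaped here.

```python
import uuid


def build_multipart(fields, files, boundary=None):
    """Re-create a multipart/form-data body from already-parsed parts.

    fields: {name: str value}
    files:  [(field_name, filename, content_type, data_bytes)]
    Returns (content_type_header_value, body_bytes).
    """
    boundary = boundary or uuid.uuid4().hex  # must not occur inside any part
    delim = b"--" + boundary.encode("ascii")
    crlf = b"\r\n"
    out = bytearray()
    for name, value in fields.items():
        out += delim + crlf
        out += f'Content-Disposition: form-data; name="{name}"'.encode("utf-8") + crlf + crlf
        out += value.encode("utf-8") + crlf
    for field, filename, ctype, data in files:
        out += delim + crlf
        out += (f'Content-Disposition: form-data; name="{field}"; '
                f'filename="{filename}"').encode("utf-8") + crlf
        out += f"Content-Type: {ctype}".encode("ascii") + crlf + crlf
        out += data + crlf
    out += delim + b"--" + crlf  # closing delimiter
    return f"multipart/form-data; boundary={boundary}", bytes(out)
```

A random boundary keeps the delimiter from colliding with file contents; the returned header value has to replace the original Content-Type, because the browser's boundary is no longer the one in the body.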

After all this - we then get a beautiful clean blob back from loolwsd containing all the headers & content we want to proxy back.

Unfortunately, PHP is not going to let you open php://output and write that across - so, we have to parse the response, and manually populate the headers before dropping the body in. Too helpful by half.
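The splitting step can be sketched in Python as below; in PHP the resulting status and header pairs would then be replayed with `http_response_code()` and `header()` before echoing the body. The function name is hypothetical, and the sketch assumes the daemon returns a plain HTTP/1.1 response with CRLF line endings and no chunked encoding.

```python
def split_raw_response(blob):
    """Split a raw HTTP/1.1 response into (status_code, [(name, value)], body)."""
    head, sep, body = blob.partition(b"\r\n\r\n")  # first blank line ends the headers
    if not sep:
        raise ValueError("no end of headers found")
    status_line, *header_lines = head.decode("latin-1").split("\r\n")
    status = int(status_line.split(" ", 2)[1])  # "HTTP/1.1 200 OK" -> 200
    headers = []
    for line in header_lines:
        name, _, value = line.partition(":")
        headers.append((name.strip(), value.strip()))
    return status, headers, body
```

Only the first blank line is treated as the separator, so a body that itself contains blank lines comes through untouched.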

After doing all of this we are ~3ms older per request or more.

It would be really lovely to have a php://rawinput and a php://rawoutput that provided the sharp tool we could use to shoot ourselves in the foot more quickly.

Our initial implementation was concerned about reply latency - how can we get a reply back from the server as soon as it is available ? We implemented an elegant scheme with a rotating series of four 'wait' requests, which we turned over every few seconds before the web-server closed them. When a message from loolwsd needed sending, we could immediately return it without waiting for an incoming message. Beautiful!

This scheme fell foul of the fact that each PHP process you have sitting there waiting consumes a non-renewable resource: my webserver defaults to only ~10 processes, so this limited collaboration to around two users without gumming the machine up: horrible. Clearly it is vital to keep PHP transaction time low, so we need to get in and out very fast; all those nice ideas about waiting a little while for responses to be prepared before returning a message sound great, but consume this limited resource of concurrent PHP transactions.
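As a back-of-envelope check of those numbers, assuming each user keeps all four 'wait' requests open at once and the web server allows about ten concurrent PHP processes:

$$
\left\lfloor \frac{10\ \text{processes}}{4\ \text{wait requests per user}} \right\rfloor = 2\ \text{users}, \qquad 10 - 2 \times 4 = 2\ \text{processes left for all other traffic}
$$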

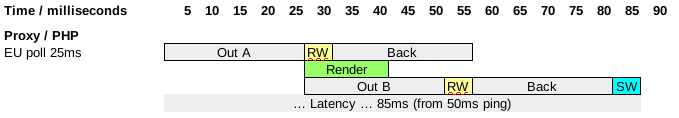

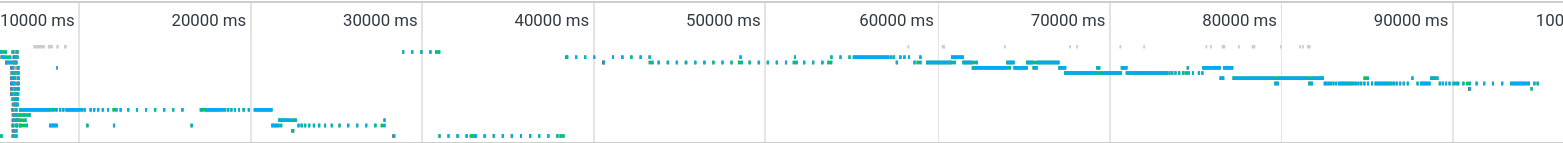

So - bad news: it is necessary to do rabid polling to sustain multiple concurrent users. That is rather unfortunate. This picture shows how needing to get out of Apache/PHP fast gives us an extra polling period of latency:

We have to ping the server every ~25ms or so (this is around half our target in-continent latency). Luckily we can back-off from doing this too regularly as we notice that when we poll nothing is going or coming back. Currently we exponentially reduce our polling frequency from 25ms to every 500ms when we notice nothing is happening on the wire. That means that another collaborator might have typed something and we might only catch-up 500ms later, but of course anything we type will reset that, and show up very rapidly:
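The back-off described above can be sketched as a small function. The 25ms and 500ms bounds come from the text; the doubling factor is an assumption (the text only says the polling frequency is reduced exponentially), and the real client is JavaScript in the browser, so this Python version is purely illustrative.

```python
def next_poll_interval(current_ms, activity, fast_ms=25, slow_ms=500, factor=2):
    """Return the delay in ms before the next poll.

    Any traffic in either direction snaps back to the fast rate; otherwise
    the interval grows geometrically until it reaches the slow ceiling.
    """
    if activity:
        return fast_ms
    return min(current_ms * factor, slow_ms)
```

Starting from 25ms on an idle wire, this gives intervals of 50, 100, 200, 400 and then 500ms, where it stays until something is typed or received.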

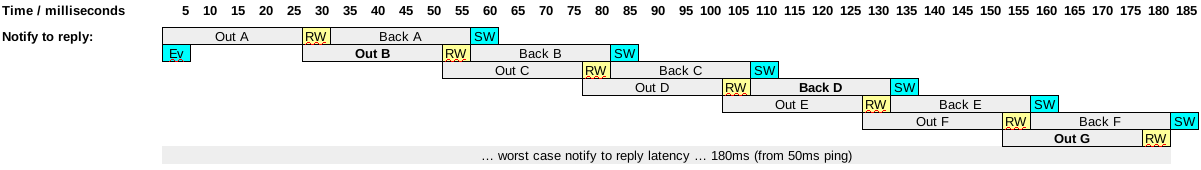

Of course, the worst case, where the server needs to get information to the client to respond (and it goes without saying that our protocol is asynchronous everywhere), is around an inter-continental latency: event 'Ev' misses the train and is sent with B, the response misses the train 'C' and returns in Back 'D', then the browser reply misses Out 'F' and the data gets back in Out 'G':

So much for design spreadsheets - what does it look like in practice ? There is a flurry of connections at startup, and then a clear exponential back-off - we continue to tune this:

As we get into the editing session you can see that connections are re-used, and new ones are created as we go along:

Perhaps an interesting approach, this comes with a few caveats:

proxy_prefix setting in loolwsd.xml if you want something similar.

All in all, once you have things running, it is a good idea to spend a little extra time installing a docker image / packages and configuring your web-server to do proper proxying of web-sockets.

Many thanks to Collaborans: Kendy, Ash, Muhammet, Mert & Andras, as well as Julius from Nextcloud - who took my poor quality prototype & turned it into a product. Anyone can have a bad idea - it takes real skill and dedication to make it work beautifully; thank you. Of course, thanks too to the LibreOffice community for their suggestions & feedback. Next: we continue to work on tuning and improving latency hiding, for example by grouping together the large number of SVG icon images that we need to load and cache in the browser on first-load, which will be generally useful to all users. And of course we love to be able to continue investing in better integration with our partners' rich solutions.

php://input documentation is very misleading - the input is simply not present for some RFC1867 POSTs, spent time re-constructing that.

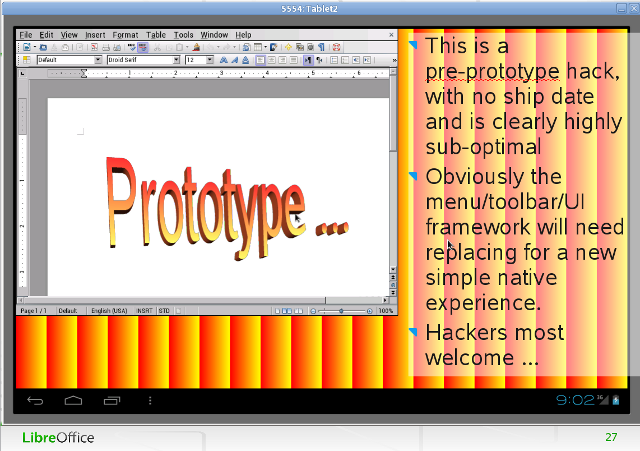

Today we ship our first supported release of LibreOffice for Android and iOS phones. Of course, there is some serious engineering work under the hood there over a long period. You can read all of the nice marketing and pretty screenshots but how did this happen ?

SUSE was a foundational supporter of LibreOffice, and it was clear that smartphones were becoming a thing, and something needed to be done here. Also Apache OpenOffice was being used (without anything being contributed back) by AndrOpen Office - which looked like 'X on Android', so we needed a gap-plugging solution, and fast.

Luckily a chunk of the necessary work, cross-compiling, was dual-purpose. Getting it to work was part of our plan inside SUSE to build our Windows LibreOffice with MINGW under SLES. That would give us a saner, more reliable, and repeatable build-system for our problem OS: Windows.

Of course we used that to target Android as well, you can see Tor's first commit. We had a very steep learning curve; imagine having to patch the ARM assembler of your system libraries to make STL work for example.

FOSDEM as always provided a huge impetus (check out my slides) to deliver on the ambitious "On-line and in your pocket" thing. I have hazy visions of debugging late at night in a hotel room with Kendy to get our first working screenshot there:

Interestingly the 'online' piece was (at that time) gtk-broadway based. Anyhow, while we couldn't do a huge amount of work at SUSE on Android (it was too obviously far away from our core market), we did valuable prototyping.

We also discovered that the modular architecture of LibreOffice, whereby there is a shared library for everything (even loading shared libraries), is not only a Linux / linking performance disaster-area, but also that Android had a hard-coded limit of 96 loaded .so libraries, with no clear view of how many are used by the system; oh - and it is not as if dlopen fails, you just hard crash: another cute-ness of the 'droid of the time.

After SUSE spun us out to form Collabora's new Productivity division - we worked hard on this problem. A key investor there was the VC funded CloudOn who worked with Collabora to build a native iOS app for iPad as an alternative to their cloud-hosted, H264 video streaming of a remote VM containing MS Office.

This investment helped Tor (once again) to bring his existing prototype iOS version into much better shape. It also let us fund Matúš Kukan to start the epic task of re-working all the UNO components' entry-points to allow us to link them into a single binary, and better, by splitting them, to get the linker to garbage collect any bits we didn't need.

CloudOn built a proprietary product from the LibreOffice base, that was rather slick, and then got acquired by DropBox & vanished; making funding tight.

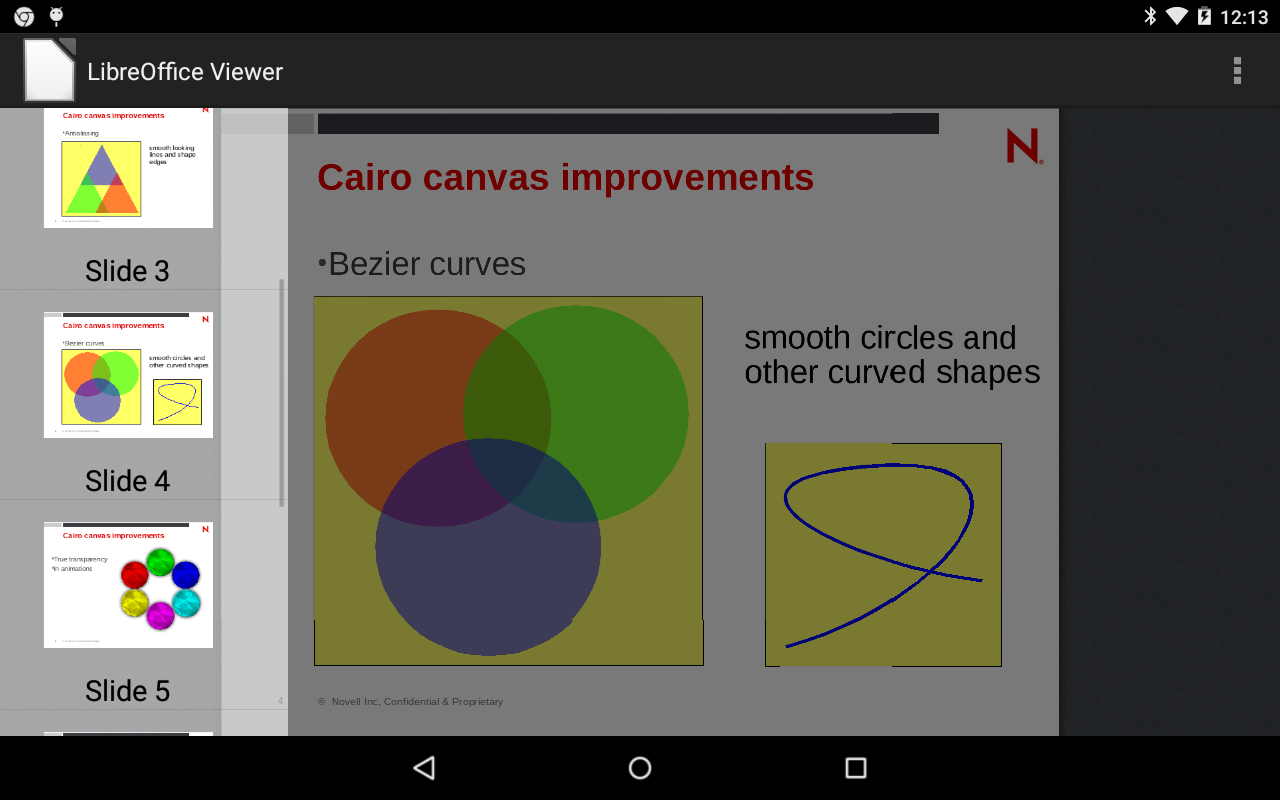

Thankfully, in 2014 we had a visionary funding from Smoose and with their help we could work on the problems again now at Collabora and drive to getting a 'real' viewer working - built on exposing the LibreOffice core through a simple shared-library interface: LibreOfficeKit.

The write-up has all manner of detailed credits there also to Kendy, Tor and for the document browser to Ian Billet's GSOC project, with Jacobo Perez (Igalia).

Fundamentally this was built on re-using the Java Fennec tiled-rendering code from Firefox for Android - Tomaz 'Quikee' Vajngel did that heavy lift.

So now we had a tiled-rendering mobile document editor for a FLOSS mobile platform - with much of the power of LibreOffice. Problem is - it was not editable, although we had done a chunk of work on editability for CloudOn in the past so we knew it could be done.

TDF posted a tender to "develop the base framework for an Android version of LibreOffice with basic editing capabilities".

Collabora won part of that for the core work for Eur 84k (an important 'angel' investment into tiled editing, although well under 5% of the total investment here, valuable - thanks to TDF's Donors). Igalia won the piece to implement an ownCloud integration. The intention was to build basic functionality that could be built on by the community, or by using a crowd-funding campaign.

We released a prototype editor preview of LibreOffice for Android in April 2015, and this was taken over by TDF in late May 2015.

For the next five years, the Android app has been maintained by the community, with editing as an experimental / advanced feature. Christian Lohmaier did some excellent work of fighting bit-rot, and keeping the viewer working for TDF.

Collabora invested the time to mentor several GSOC students doing smaller feature pieces for the viewer like Gautam Prajapati, and reviewed patches & fixed things here and there, and evangelized.

TDF published calls for people to volunteer and participate - some of them incredibly pretty, recently in 2018:

Unfortunately, the net result of all this hard work didn't attract significant numbers of people to develop and sustain the Android app, and it continued not to deliver a product that people wanted to use. In recent times, TDF marketing suggested removing it, since it hadn't been updated for nearly two years and had lots of negative reviews, and this was done.

Meanwhile, while the Java based Android solution was not going anywhere fast, in parallel (from 2015) Collabora and Icewarp started working on an Open Source alternative to Apps, Office 365.

We built on the foundational work above, extending it hugely, ultimately releasing COllabora OnLine (COOL) 1.0 and iterating on it rapidly for our customers.

Many years of extraordinarily hard work primarily from Collabora's growing team of engineers working on Collabora Online - improving interactivity, latency, responsiveness, usability etc. gave us a growing number of happy users in PC web browsers.

Then in early 2018, having jointly sold a (clearly labelled) 'Online' solution for use with iPads, using a remote browser based responsive UI that we had been working hard on, it was decided that, independent of the clarity of the product description, contract etc. this should work off-line.

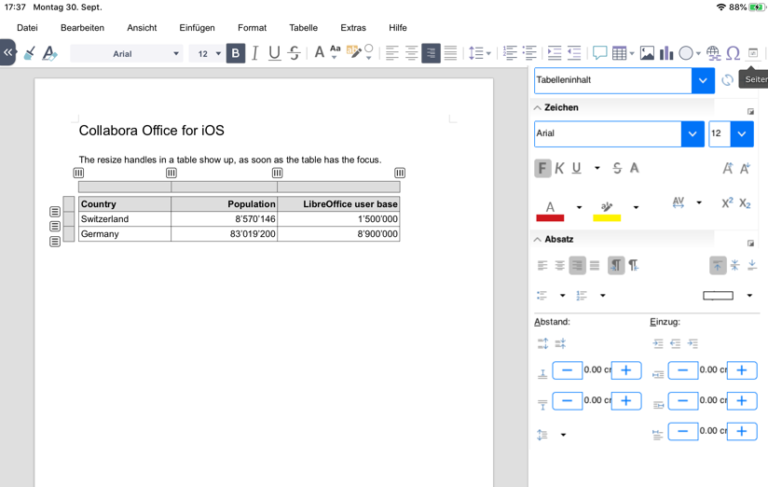

Thus started an incredible sprint to deliver a great iPad app. Clearly it was not possible to reuse the old Fennec approach, so starting from scratch we re-used the core code, and re-purposed the native browser / web-view to talk to a local Online instance directly. In addition, we polished lots of native touch interactions.

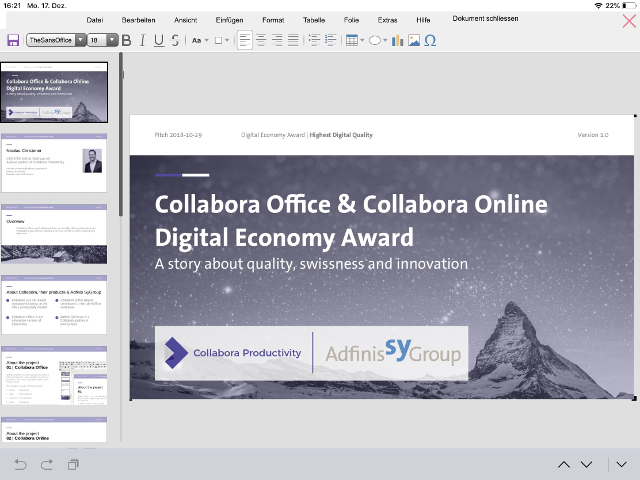

We announced this with Adfinis in late December 2018.

We then provided a lot more lush functionality, including custom widget theming, bringing the sidebar to mobile, and more in late 2019.

In a remarkable deja-vu, a joint customer of Nextcloud & Collabora also procured an on-line product, and wanted an off-line native editor, this time on Android. Thus started another amazing sprint.

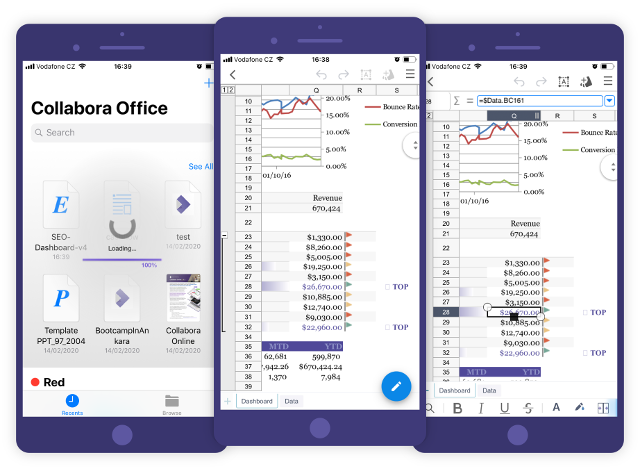

Luckily, Kendy had a prototype to bring Tor's iOS work to Android from a Collabora Hackweek (allowing our engineers to work on anything LibreOffice-related they want for a week) in early 2019, and then Florin Ciornei ported much of the functionality from the Fennec-based app to this one.

During his Google Summer of Code, Kaishu Sahu then finished the porting and added more functionality - like inserting local images or printing.

Throughout the year, many people from the Collabora team have improved various aspects of the Android app; let me list them in alphabetical order:

Our responsive UI from Feb 2019 provided lots of our rich functionality including dialogs on mobile - although the UX there was not ideal:

It was then decided that having all this powerful functionality made the app hard to use with one hand, and that a more limited set of features would make for a more compelling single-touch UI. Again, we changed direction and worked hard on that with a first beta release in late 2019.

Another thing that we created was the expandable bottom palette of simple, frequently used tools created by Szymon Klos, which effectively re-purposes the LibreOffice sidebar tools for mobile devices (with some tweaks).

Further work here targeted the native platform's storage provider interface on Android, which allows much richer, built-in integration with lots of providers: ownCloud, Nextcloud, Seafile - even OneDrive or DropBox if unavoidable. This retired Igalia's native Java integration, which pre-dated those APIs.

All of this wrapped together, with thirty-five Collabora committers contributing 10,100 of the 10,500 commits to Online over many years, brings us to today's release, and we're excited about it:

Of course, this is just the start, we will continue to build on and receive feedback from our paying customers, and direction as to where to improve and fix the product over time; stay tuned.

Success has a thousand parents, but great credit goes to those named above, and many more who have enabled the evolution of LibreOffice to the full set of platforms. In particular, StarDivision and Sun laid the foundations and fought the good fight for many years. After them, SUSE, Collabora, CloudOn, Smoose, TDF, Adfinis-Sygroup, Google (via GSOC), and of course the whole LibreOffice Community on whose work we build: thank you !

We finally have a solution in every space: phones, tablets, in your browser, and native on PC. Now we just need to incrementally improve everything, everywhere.

All of the code is, of course, open now, and should be available in LibreOffice 7, though we have some of our own branding & theming. Please do check it out on Google Play or the iOS App store.

While thanking everyone who contributed, it is important to note that it could be you ! How can you do that ? Well - lots of ways. Why not check out the Android README and get stuck in ? It's easy to develop and debug some of the features on PC in Chrome's web-view too if you want to avoid the development cycle of Android Studio & up-loading. If you're less of a developer, there are still lots of ways to get involved with LibreOffice.

std::vector foo(lotsOfPixels,0); is on Android - more than rendering a document. Adapted mobile threading concurrency to the hardware.

My content in this blog and associated images / data under images/ and data/ directories are (usually) created by me and (unless obviously labelled otherwise) are licensed under the public domain, and/or if that doesn't float your boat a CC0 license. I encourage linking back (of course) to help people decide for themselves, in context, in the battle for ideas, and I love fixes / improvements / corrections by private mail.

In case it's not painfully obvious: the reflections reflected here are my own; mine, all mine ! and don't reflect the views of Collabora, SUSE, Novell, The Document Foundation, Spaghetti Hurlers (International), or anyone else. It's also important to realise that I'm not in on the Swedish Conspiracy. Occasionally people ask for formal photos for conferences or fun.

Michael Meeks (michael.meeks@collabora.com)